When I was in college, I took a lot of science courses. Really, a lot, maybe even too many. And mostly what they taught me was how scientists believed the world worked right then, as of the early 1990s. Luckily for me, my college roommate took a somewhat broader curriculum, and much to my benefit, we talked a lot, late at night (it was college). And somewhere in those discussions, I learned about the idea of a paradigm shift: a fundamental change in the basic concepts and experimental practices of a scientific discipline.

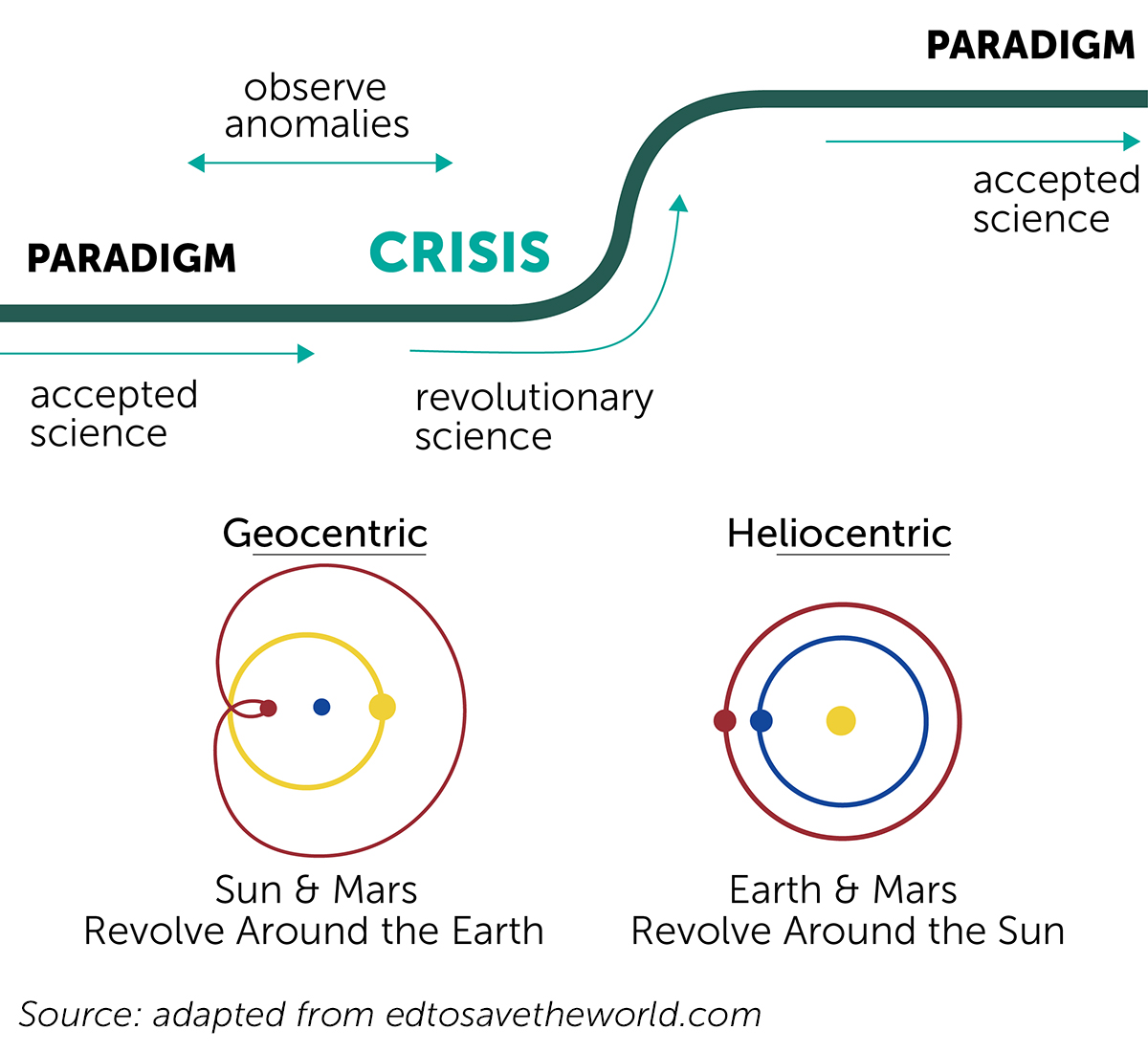

The scientist and philosopher Thomas Kuhn described this as a time when data begins to show that an existing scientific theory cannot fully explain how the world works. This revolutionary science casts theories into doubt, leading to a scientific crisis, which is resolved as a new paradigm emerges that explains the existing and the new data in a unifying framework.

We’re in the middle of one such paradigm shift right now in my field, the science of applied brain plasticity and brain training. The picture above makes it look pretty straightforward. But in reality, that upward sweep called “revolutionary science” can be pretty chaotic. What happens in the middle of a paradigm shift is scientists have to grapple with an old paradigm not explaining new data. Some scientists embrace the challenge and lead the field forward. But others defend the old paradigm to the death.

A new review in APS Psychological Science in the Public Interest entitled “Do ‘Brain-Training Programs’ Work?” shows what defending the old paradigm looks like, and it isn’t pretty. Now, there’s a lot of back-and-forth in my field. Most of it is productive — true exchanges of ideas and best practices between scientists, motivated by the desire to advance the basic science of brain plasticity, and figure out how to apply brain plasticity to real-world human problems — like aging, brain injury, or mental illness. Like any new field, there are many things to figure out — what types of brain-plasticity-based training exercises drive brain change most efficiently and most broadly, what clinical trial designs are most appropriate to answer which hypotheses, and how effective brain training can be delivered to reach the people who can benefit from it.

But the new APS article takes a very different tack. It asserts that the fundamental premise of brain training is wrong, arguing from theory that brain training hasn’t worked, doesn’t work, and can’t work. The authors then review over 130 publications of randomized controlled trials of brain training programs and, remarkably, conclude that every single one is irremediably flawed, and that little can be learned from the efforts of hundreds of scientists, years of research, and millions of dollars of NIH funding. A commentary published with the article goes on to suggest that the way forward is backward: that the field should stop all this new-fangled brain plasticity stuff and return to its hundred-year-old roots in mnemonics, which is, coincidentally, the area of expertise of the authors.

This is just silly. Normally, when someone says something a little silly in public, I let them go about their business. But in this case, I’m going to make an exception, because it’s important that we as a field push through this turbulent period of change, and get to a new paradigm that integrates all of the new data from brain training science.

Modern Plasticity-Based Brain Training

To understand just why the APS paper is so wrong-headed, we have to start with some background on plasticity-based brain training (for simplicity, I’ll just call it brain training from here on out). This background will take us through a previous paradigm shift — the revolution in brain plasticity, led by Posit Science co-founder and recent Kavli Prize Laureate in Neuroscience, Dr. Michael Merzenich.

When Mike began his scientific work in the late 1960s, the prevailing paradigm held that the brain was plastic (capable of change) only during childhood, and that afterward the brain became hard-wired and incapable of change. As a young scientist, Mike performed a set of pioneering experiments showing that the adult brain is plastic. Changing the input the brain received dramatically changed the organization of the brain. For example, training a monkey to make a detailed sensory judgment using its index finger (which of two vibrations was faster) led to plastic reorganization in the structure and function of the monkey’s brain, which directly explained the monkey’s behavioral improvements. Dozens, then hundreds, then thousands of experiments followed, showing brain plasticity across animal and human models, across sensory systems, across young and old conditions.

But this work was controversial. Mike’s research was repeatedly attacked because it did not fit the prevailing paradigm—brains can’t change. One Nobel laureate, who had studied brain plasticity in children, even went so far as to re-run Mike’s experiments in his own lab to prove Merzenich was wrong. However, he found Merzenich was right, and told the world that he was adjusting his theoretical construct to fit the new facts.

Along the way, Mike was able to apply the principles of brain plasticity in a practical way. He was part of a team of researchers at UCSF working on an artificial cochlea — the part of the ear that converts sound waves into electrical inputs to the brain. Damage to the cochlea is a common cause of deafness in children and adults. Mike’s challenge was that the natural cochlea has about 3,000 connection points, which, the conventional wisdom held, made an implant procedure impossible. Mike’s contribution to the project was that he figured out how to do it with a small number of connections. They found the brain’s plasticity would fill in the missing information over time, as it trained itself to differentiate speech inputs. The cochlear implant has been used to restore hearing to more than 300,000 people with deafness — and it works because of brain plasticity.

You’d think that would have settled the matter, but it actually took another 20 years and thousands of peer-reviewed scientific articles to change the scientific paradigm. And it happened: adult brain plasticity is now a core concept in neuroscience, taught in introductory (and advanced) classes, and featured in every major neuroscience textbook.

To Mike Merzenich and other pioneers in applied brain plasticity research, these discoveries offered a message of great hope. With the right regimen of inputs — brain training — the brain could be re-wired in a positive way to improve performance in anyone.

At Posit Science, we’ve built upon this basic science to develop software-based brain training programs. Our reasoning is straightforward — if the basic science of brain plasticity shows that we can make information processing in the brain faster and more accurate, what would happen to a person’s cognitive abilities with a faster and more accurate brain? Well, it turns out that a lot of good things happen. For example, published peer-reviewed studies have shown improvements in standard measures of cognitive function — like attention, memory, and executive function; standard measures of real-world performance — like instrumental activities of daily living and driving safety; and standard measures of overall health and well-being — like health-related quality of life, confidence and mood.

In a way, no one really should be surprised that we can build computerized exercises to improve these elemental systems, nor that they transfer to higher cognitive function. The only real surprise would be if improving the speed and accuracy of brain performance did not lead to better real-world outcomes.

Does this mean that every brain training program drives generalized cognitive benefit? Of course not, and we will always need good clinical trials to inform us about what works and what doesn’t. Programs targeting working memory are likely to have different properties than programs targeting the speed and accuracy of information processing. And some companies have loosely used the term “neuroplasticity” to defend their programs, when no clinical evidence supported their efficacy. But the basic science of brain plasticity teaches us that there is a way to build plasticity-based training programs that will improve brain function, cognitive function, and real-world function.

But while most scientists now accept the idea of lifelong brain plasticity as fact, it is becoming increasingly apparent that a surprisingly large number have not considered the full implications of that fact.

And that takes us to today’s APS paper.

What I Think About the APS Paper

The paper has basic factual errors, it reflects that the authors’ minds were made up before they began their review, it works very hard to dismiss the observed facts in order to avoid changing outdated theories, it proposes methodologies that will hobble scientific progress, and it fundamentally does not value the lives of older people.

Let’s have a look.

There are basic factual errors in the data review

I first read the section on the IMPACT study, since I am a co-author, and I know the study extremely well. I found two major factual errors in the first few paragraphs. These are errors that even a cursory reading of the study’s methods section should have prevented. First, the reviewers criticize IMPACT for not having an a priori primary outcome measure (that is, a single outcome measure pre-specified by the investigators in the data analysis plan to avoid statistical problems from multiple comparisons). The only problem is that IMPACT did have an a priori primary outcome measure, as described on the very first page of the paper. Second, they claim that IMPACT altered its data analysis procedures between the first and second publication, but exactly the same analytical methods were used in both papers, again clearly described in the methods sections. I think it’s quite likely that authors of the other studies discussed in the manuscript would find a similar pattern of factual errors. When you can’t get the basic facts right, the review of studies becomes garbage in, garbage out.

The pattern of errors highlights the pre-conceived notions

These factual errors may seem small — but what they highlight is that the authors come to their review with an unshakeable belief that these studies are wrong, and they’re not going to let the actual study designs or data get in their way. IMPACT was a prospective, randomized, controlled, double-blind, parallel arm trial — the gold standard. Follow-on studies confirmed and extended the results. When confronted with these excellent studies, the authors simply dismiss the data, saying things like “the results represent fairly narrow transfer.” Well, that’s a matter of opinion. Another way to look at those results is that they are “breakthrough results that show generalization to real-world measures.” I can only think that if these authors met a talking dog, they would probably complain that its vocabulary wasn’t big enough.

No study can ever be good enough

Towards the end of the manuscript, the authors lay out a set of clinical trial best practices, which I agree with and support. But the devil is always in the details. For example, one of the recommendations describes the value of placebo controls. And of course, placebo controls are valuable! Many of the studies they review use all sorts of good placebo controls — in fact some of them are so good, they may have some limited value, and, thus, we generally call them “active controls” — including adult education courses, brain games, crosswords, internet training, video games — the list goes on. But not a single one of these placebo controls is good enough to meet the authors’ theoretical standards — that the control needs to be somehow both indistinguishable from the intervention, yet also not designed to improve cognitive function. This is not a question of setting the bar high — it’s a question of actively impeding scientific progress by inventing a bar that no study can meet.

Oddly, the authors’ own studies don’t appear to meet their own criteria. One point that caught my attention is pre-trial registration, a practice I strongly support, in which clinical trials are formally described before they begin to enroll participants, to prevent the lack of publication of negative results and inappropriate changes to data analysis plans. You can look me up at ClinicalTrials.gov, where you’ll see my pre-registered trials. Look up a few key authors from the new manuscript. I can’t find a single one. Here are two examples of published trials that should have been pre-registered, but as far as I can tell, they weren’t. Why would a person propose standards for others that they themselves do not meet?

No group of studies can ever be good enough

Science advances when you look at the weight and direction of the evidence across studies. And, in fact, several recently published meta-analyses, including Lampit 2014 and Kueider 2012, conclude that certain kinds of brain training work. But in the current APS article, the authors criticize each individual study while failing to acknowledge that, as a group, the studies often reinforce each other’s conclusions and fill in the limitations they cite. For example, the authors discuss problems that can emerge from multiple hypothesis testing: essentially, if a study evaluates 20 measures at the conventional 0.05 significance threshold, about one can be expected to come out statistically significant by chance alone. That’s a valid concern, and individual studies can be legitimately criticized on this basis. But the authors don’t point out that multiple studies confirm the same results over and over again. For example, speed training has been shown to generalize to timed instrumental activities of daily living (a real-world, directly observed functional performance measure) in four (1,2,3,4) different randomized controlled trials. The authors can’t see the forest for the trees.
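
The multiple-comparisons arithmetic is easy to check for yourself. Here is a minimal Python sketch, assuming the conventional 0.05 significance threshold, statistically independent measures, and no true effects at all. These assumptions and the function names are mine, for illustration only; they do not come from the APS paper or from any of the trials. The sketch shows both sides of the argument: how easily a spurious result turns up among 20 measures in one study, and how unlikely it is for the same pre-specified measure to come up significant by chance in several independent trials.

```python
# Illustrative assumptions (not taken from the APS paper or any trial):
# - each measure is tested at alpha = 0.05
# - measures and studies are statistically independent
# - there is no true effect anywhere, so every "hit" is a false positive
ALPHA = 0.05


def expected_false_positives(n_measures: int, alpha: float = ALPHA) -> float:
    """Average number of measures that reach significance by chance alone."""
    return n_measures * alpha


def prob_at_least_one_false_positive(n_measures: int, alpha: float = ALPHA) -> float:
    """Chance that a single study shows one or more spurious significant results."""
    return 1.0 - (1.0 - alpha) ** n_measures


def prob_all_studies_hit_by_chance(n_studies: int, alpha: float = ALPHA) -> float:
    """Chance that the same pre-specified measure is spuriously significant
    in every one of n_studies independent trials."""
    return alpha ** n_studies


if __name__ == "__main__":
    print(expected_false_positives(20))
    print(prob_at_least_one_false_positive(20))
    print(prob_all_studies_hit_by_chance(4))
```

Under these assumptions, 20 measures give about one expected false positive and roughly a 64% chance of at least one, while four independent trials all hitting the same measure by chance works out to about 6 in a million. Real trials are never perfectly independent, so treat these numbers as rough orders of magnitude rather than as a formal analysis.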

Conflicts of interest

It’s appropriate to mention that the authors of the manuscript are themselves entangled with the brain training industry — their conflict of interest disclosure mentions their work evaluating brain training products, running brain training studies that never published, contributing to the design of commercial brain training products with limited evidence of efficacy, and designing games to evaluate cognition. Their effort to throw out the past twenty years of research should be carefully considered in that light.

Moral considerations

They spend an entire page making the argument that reducing crash rates in older drivers is irrelevant, because older drivers don’t crash very often. That’s pretty callous thinking. Older adults are more likely to suffer a car crash, more likely to be seriously injured in a car crash, and more likely to die in a car crash. Brain training reduces crashes in older drivers, both in randomized controlled trials and in real-world field evaluations. A person in the health sciences who argued that we shouldn’t reduce heart attacks because heart attacks are rare would be rightfully drummed out of the profession; and a person in the health sciences who argues that we shouldn’t address issues with serious health consequences, because a majority of people are not likely to have the issue, is taking a morally indefensible position. I realize the studies create inconvenient facts for the argument that training cannot generalize, but what kind of person would make the argument that saving the lives of older drivers just doesn’t matter?

This is What Happens When One Paradigm Topples Another

The authors are all psychologists. Yet most of the papers they discuss deeply involve the field of neuroscience and the sub-specialty of applied neuroplasticity. The science of brain plasticity plays a minimal role in the body of the text — there’s no discussion of the actual science of brain change. That’s an important disconnect. There are literally thousands of studies showing that training drives structural, functional, and chemical changes in the brain. It’s as if these authors have locked themselves away for the past thirty years, blissfully undisturbed by advances in modern neuroscience. Of course, many psychologists have not only embraced these advances but have driven very important discoveries based on them. But the tone of this article shows what it must feel like to be left behind as science moves ahead.

An Invisible Gorilla

Dr. Daniel Simons, the lead author of the paper, is best known for his work on the psychology of attention. In a beautiful and fun experiment, he showed that if he directs a viewer’s attention to the details of a visual scene (in the original experiment, he asked a viewer to watch a team of basketball players passing a ball, and count how many passes were made), the viewer will miss the most important aspect of the scene (i.e., that a gorilla walks right through it!).

It’s a great demonstration — no clinical trial needed. The entrepreneurial Dr. Simons has turned this demonstration into two web sites, a series of blog posts, a best-selling book, and a well-paid corporate speaking career arranged by the BrightSight group.

Reading the APS paper is like seeing the invisible gorilla experiment in action. The authors carefully direct the reader’s attention to a truly impressive number of details, and ask the reader to count and track everything, as they ricochet between the limitations of individual studies. But in doing so, they lead the reader to miss the gorilla in the room — that since brain plasticity can change the brain, and the brain is responsible for cognitive function, brain plasticity can change cognitive function. That’s the gorilla walking through the more than 70 pages of the APS article. But thanks to Dr. Simons’ prior work, we should be alert to the trick.

I work at (and own a small piece of) Posit Science, where we develop, test, and provide a brain training program — BrainHQ. I work here because my grandfather passed away from Alzheimer’s disease, and we need to bring new science to bear on this and many other brain-based disorders. Among other radical beliefs that pose a conflict of interest with the manuscript I discuss in this article, I believe that brain plasticity has consequences for human behavior, and that preventing car crashes in senior drivers is a worthwhile goal.

Excellent post, thank you. I will be using it to facilitate discussion in my classroom.

Great article

Prevent those car crashes

Vic

How do I determine the best program

I have been on Lumosity

Thanks for the analysis. I just read the WSJ article. They did acknowledge that there can be improvement in eye-hand coordination, which is a problem for me. A small point but nice.

Are you familiar with HealthNewsReview.org–feedback@healthnewsreview.org?

I suggest you send your comment to them for inclusion in the U. of Minnesota quality in journalism star series.

I tend to agree with the WSJ article that one is able to get better at particular exercises by playing them often. I don’t know if I am just getting better at playing the exercise or actually helping my brain. The exercises that I believe are most rewarding are exercises such as Right Turn and Mind Bender. These exercises require one to not only deduce the proper solution, but to communicate very quickly to one’s hand to select the proper solution. I often see the correct solution, but am not able to choose it fast enough, which is very frustrating. To really see how much co-ordination is required in these exercises, attempt to play them using the non-dominant hand.

Dear Henry,

I read your blog on Brain Training and its Critics with great interest and much emphatic nodding of my head!

I am as passionate about the work I do as you obviously are about yours – I do Neuro Linguistic Programming (NLP) Therapy, empowering people to achieve rapid, lasting change in any mind issue that is not useful for them.

My colleagues and I in New Zealand face similar limiting beliefs from the majority of psychologists, CBT Therapists, counsellors and others with vested interests in maintaining the existing paradigm. These are the people who have the influence with the powers-that-be who are in control of public funding, meaning that NLP work is not funded and many people are missing out on its benefits.

In parallel with the paper to which you refer, some Psychologists in debunking NLP quote a meta-study that fails to correctly identify the purpose, methods and application of NLP, and is consequently flawed from start to finish.

Further, there is a study currently underway in the US, using NLP with returned service people to clear entrenched PTSD. They are achieving the dramatic results we have come to expect, however they have had to remove all mention of the origins of the treatment because whenever “NLP” appeared on the funding applications, any Psychologists who had any sway in the funding would turn it down. Now there is no trace of the true modality of the work relating to this study. This sort of thing makes it incredibly difficult for those of us wishing to promote the benefits of NLP in any area.

So finally I get to my point! NLP processes are so rapid (as a very small sample, clearing PTSD in an average of 5 x 1 hourly sessions, or a phobia in less than an hour) that I and others would be intrigued to know just what is happening in the brain. Can plasticity occur at an even faster rate than that posited in Norman Doidge’s wonderful book, The Brain That Changes Itself? The results we get from NLP work suggest just that.

We simply do not have the resources, expertise or backing to conduct studies on this, and my purpose in writing to you is twofold:

1. Simply to reach out and say “Yes! I get it! We must open our minds to new paradigms and persistently and consistently chip away at those whose belief structures (and in many cases, fears) prevent them from acknowledging the benefits of something ‘different’ to what they know.”

2. Can you offer any advice on how a small group of people in a small country might do this in a more effective way?

I discovered your brain training programs from Doidge’s book and am keen to use them to increase my own acuity skills in my work, and to discover how some of my clients could be helped to engage parts of their brains that for a variety of reasons may be ‘underused’.

Thank you for your work.

In the early 1980s, from my teens to my late 20s, I struggled academically. While high school wasn’t a problem, college was. I could not keep up and felt that I was in a continual mental fog.

But the one thing I had was a determination to succeed. So, long before I knew there was such a thing as Neuroplasticity, I “naively” set out to find ways to become smarter. I took supplements, ran 5 miles a day, listened to motivational tapes, meditated, and read lots of books (especially biographies of successful business people).

In my late 20s and beyond, things started to click. I wrote and directed a play which combined live action and 3 video screens, long before digital media. It was performed and did well. I made a few films here and there. I started a business, which was really a pre-Internet version of Facebook. In 1997, I wrote a 600-page book on a mathematical algorithm for predicting the behavior of the financial markets…and I was given a $5000 advance by a publisher for it.

Then, in the 1990s, I held executive positions in several companies, one of which is fairly well known in the financial markets realm. Today, I own several successful businesses and I am a founding partner of a publicly traded company.

Did I just overcome some hormonal imbalance or did I actually become smarter? Or maybe a little of both? Either way, if you knew the person I was back then in the 70s and early 80s, I have no doubt that you would realize that I am a different person today.

Today, I am an avid user of BrainHQ because I believe I have already experienced some degree of Neuroplasticity early in my life.

The work you guys are doing is extremely important. While BrainHQ is not about performing unrealistic miracles, as we all have whatever genetic potential we’re born with, I do believe that, here and there, there might be some folks who cross the same kind of borderline that I crossed.

That’s an interesting web site – thanks for the link!

Those two exercises are very challenging because they are what we call “continuous performance exercises” – they require not only fast and accurate cognitive processing, but fast and accurate physical responses – you have to click quickly! They are really meant for advanced users. If you’re keeping up, then great – keep at it! If you’re finding them too challenging, I would recommend focusing on core speed exercises like Hawk Eye or Auditory Sweeps that give you all the time you need to respond, while still challenging your fundamental brain speed.

I agree – brain science is still a new science, and it’s important that we’re open to new ways of thinking. And of course, at the same time, we should maintain rigorous scientific standards so we know that what we’re doing works. Well-designed and well-executed initial studies are key to building momentum. In the US, I’d recommend partnering with a university-based research group, applying for NIH SBIR/STTR grants (I don’t know what the equivalent is in New Zealand), or finding an individual who can make a donation to support an initial study.